Security Checklists · May 2, 2026

Top 10 Security Risks in AI-Driven Visa Applications and How to Mitigate Them

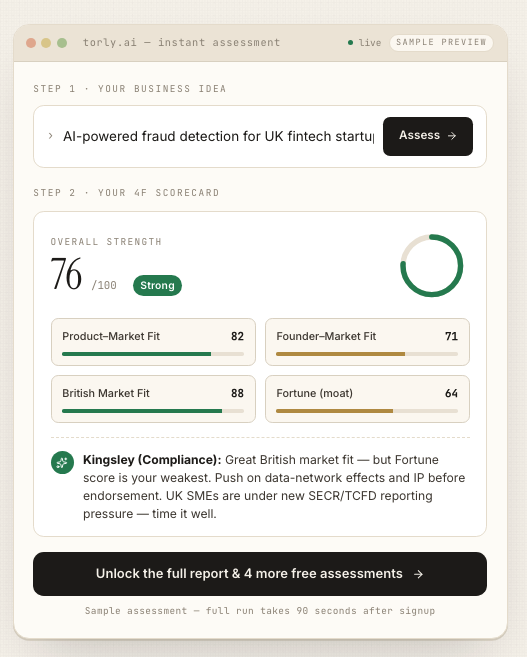

Explore the top 10 security vulnerabilities in AI-assisted visa applications and learn how Torly.ai implements robust safeguards to protect your data.

Introduction: Battling LLM Vulnerability in Visa Automation

AI-driven visa systems promise speed and precision, but they also introduce a fresh set of risks. At the heart of many solutions lies a large language model, and this brings a critical challenge: LLM vulnerability. In this article, we dissect the top 10 security hazards that can undermine AI-assisted visa applications and offer practical ways to plug those gaps.

Whether you’re an immigration professional or an entrepreneur eyeing the Innovator Founder Visa, it’s crucial to grasp these pitfalls. Our guide shows how Torly.ai’s AI-Powered UK Innovator Visa Application Assistant guards against each threat, turning complexity into confidence. Ready to shore up your defences? Mitigate LLM vulnerability with our AI-Powered UK Innovator Visa Application Assistant

Understanding LLM Vulnerabilities in Visa Applications

Visa workflows rely on intelligent prompts, document analysis and background checks. Each automated step leans heavily on the LLM’s output. Yet every automation layer can be targeted, from malicious inputs to data leaks. That’s where LLM vulnerability stares you in the face: a crafty attacker or an unforeseen bug might derail your entire application pipeline.

Mitigation starts with awareness. You need to spot where the AI could misinterpret instructions or expose personal data. Torly.ai’s platform embeds continuous checks, so you get early warnings before an error goes live. In the next sections, we unpack each risk, explain real-world impacts and suggest robust safeguards.

Top 10 Security Risks and Mitigations

1. Prompt Injection

Risk: Attackers craft inputs that manipulate the LLM into revealing sensitive details or executing unintended commands. In visa apps, this could mean leaked personal data or forged document templates.

Impact: Personal information exposure, compliance breaches, reputational loss.

Mitigation:

– Sanitize and validate every user prompt.

– Enforce a strict input schema.

– Use prompt-sanitisation libraries to strip dangerous tokens.

Torly.ai integrates built-in filters to neutralise injection attempts before they reach the model.

2. Insecure Output Handling

Risk: Failing to validate AI outputs can lead to flawed document generation—like incorrect visa conditions or missing declarations. This is a prime LLM vulnerability.

Impact: Faulty applications, rejected visas, legal liability.

Mitigation:

– Implement output validation routines (e.g. schema checks).

– Flag anomalous responses automatically.

– Introduce human-in-the-loop reviews for critical fields.

3. Training Data Poisoning

Risk: Malicious edits in the training corpus produce skewed reasoning—say, misidentifying required evidence for endorsement. The AI then misguides applicants.

Impact: Weak applications, wasted fees, poor user trust.

Mitigation:

– Secure your data pipeline with checksums.

– Monitor training data sources for anomalies.

– Retrain only on verified datasets.

4. Model Denial of Service

Risk: Flooding the LLM with resource-heavy requests slows or blocks visa services, a subtle but potent LLM vulnerability.

Impact: Downtime, user frustration, cost spikes.

Mitigation:

– Rate-limit API calls.

– Set resource quotas per session.

– Use queuing systems to smooth peaks.

5. Supply Chain Vulnerabilities

Risk: Relies on third-party plugins or libraries that may compromise integrity—imagine a compromised OCR connector leaking passport scans.

Impact: Data breaches, compliance failures.

Mitigation:

– Vet all dependencies rigorously.

– Employ software bills of materials (SBOMs).

– Update and patch third-party modules regularly.

Build your Business Plan NOW to ensure your AI infrastructure is sound.

6. Sensitive Information Disclosure

Risk: LLMs may inadvertently include private data—names, addresses or financial info—in outputs. A clear LLM vulnerability.

Impact: GDPR violations, loss of applicant trust.

Mitigation:

– Redact PII dynamically.

– Use differential privacy in training.

– Perform automated scans for leaked data.

7. Insecure Plugin Design

Risk: Plugins processing untrusted inputs without proper access control invite exploits like remote code execution.

Impact: Full system compromise, stolen credentials.

Mitigation:

– Enforce strict sandboxing.

– Validate plugin inputs and outputs.

– Limit plugin permissions to the bare minimum.

TorlyAI BP Builder APP helps you assemble a robust endorsement package with safe integrations.

8. Excessive Agency

Risk: Granting the LLM unchecked autonomy—like auto-submitting forms—can trigger unintended actions, a core LLM vulnerability.

Impact: Mistaken submissions, policy breaches.

Mitigation:

– Require manual approval before any critical step.

– Log every decision point.

– Implement “safe mode” defaults.

9. Overreliance

Risk: Blindly trusting AI outputs without critical review leads to flawed decisions. Overreliance on LLMs itself is a vulnerability.

Impact: Poor case outcomes, legal risk.

Mitigation:

– Introduce multi-agent cross-checks.

– Keep a compliance expert in the loop.

– Use transparent scoring metrics.

10. Model Theft

Risk: Unauthorized access to proprietary visa-specific LLMs exposes trade secrets and competitive advantage.

Impact: IP loss, black-market distribution.

Mitigation:

– Secure models behind authenticated APIs.

– Watermark outputs.

– Monitor and audit access logs.

In each of these cases, Torly.ai’s platform combines real-time monitoring, encrypted data flows and expert rulesets to shield your application from LLM vulnerability points.

Building an End-to-End Defence Strategy

A foolproof security posture demands more than point fixes. You need a layered approach:

– Pre-deployment testing for injection, output anomalies and poisoning.

– Runtime monitoring for DoS attacks and data leaks.

– Continuous iteration informed by real-world feedback.

By following OWASP’s GenAI Security Project guidelines and leveraging Torly.ai’s AI-Powered UK Innovator Visa Application Assistant, you tie together all the threads—keeping your visa pipeline watertight.

Testimonials

“We were sceptical about automating our Innovator Visa prep, but Torly.ai’s 24/7 support caught prompt injection and output issues we never saw. Our approval rate jumped to 95%.”

— Sarah Gupta, Startup Founder

“The tailored gap analysis and dynamic scoring meant we submitted a rock-solid business plan in under 48 hours. No more back-and-forth with solicitors.”

— Marcus Lee, Tech Entrepreneur

“Integrating Torly.ai with our compliance checks gave us peace of mind. We’ve never had a data leak or downtime since.”

— Priya Raman, Immigration Consultant

Conclusion: Fortify Against LLM Vulnerability

AI-driven visa applications can revolutionise your process, but only if you guard against LLM vulnerability at every stage. From prompt hygiene to model theft prevention, the threats are real—yet entirely manageable. Torly.ai’s AI-Powered UK Innovator Visa Application Assistant delivers a turnkey defence, blending automated safeguards with expert oversight.

Don’t leave security to chance. Fortify your process against LLM vulnerability with our AI-Powered UK Innovator Visa Application Assistant